Squeeze and Learn: Compressing Long Sequences with Fourier Transformers for Gene Expression Prediction

Abstract

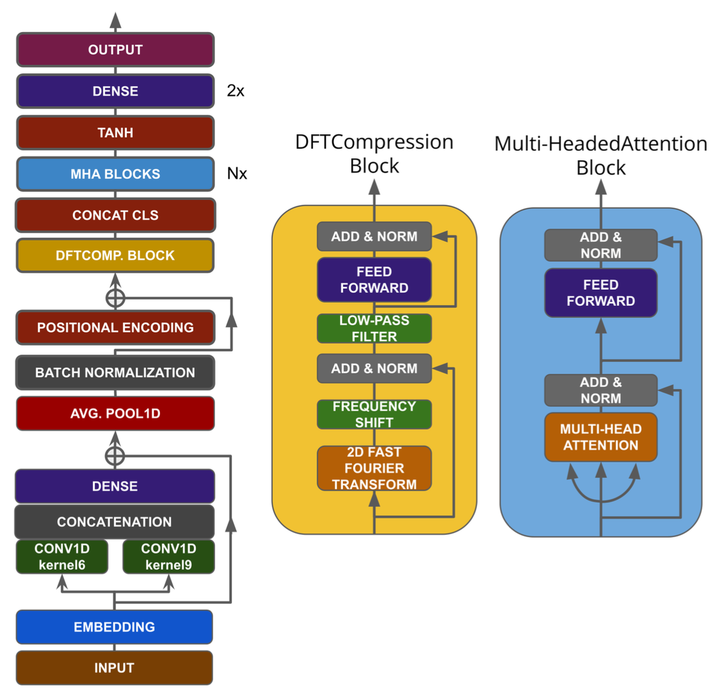

Genes regulate fundamental processes in living cells, such as the synthesis of proteins and other functional molecules. Studying gene expression is therefore crucial for both diagnostic and therapeutic purposes. State-of-the-art deep learning techniques such as Xpresso have been proposed to predict gene expression from raw DNA sequences. However, DNA sequences challenge computational approaches because of their length, typically on the order of thousands of nucleotides, and their sparsity, which requires models to capture both short- and long-range dependencies. Indeed, applying recent techniques such as Transformers, whose self-attention cost grows quadratically with sequence length, is prohibitive on common hardware. This paper proposes FNetCompression, a novel gene expression prediction method. Crucially, FNetCompression combines convolutional encoders and memory-efficient Transformers to compress the sequence by up to 95% with minimal performance tradeoff. Experiments on the Xpresso dataset show that FNetCompression outperforms our baselines by a statistically significant margin. Moreover, FNetCompression is 88% faster than a classical Transformer-based architecture, again at only a minor cost in performance.
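The abstract combines two ingredients: a convolutional encoder that shortens the DNA sequence, and an FNet-style "Fourier Transformer" layer whose token mixing is a parameter-free 2D FFT instead of self-attention. The NumPy sketch below illustrates, under stated assumptions, how these pieces could fit together; it is not FNetCompression itself. The kernel size and stride (20), hidden width (32), the single encoder block, mean pooling, the regression head, and every function name (`one_hot_dna`, `strided_conv1d`, `fourier_mixing`, `fnet_block`, `predict_expression`) are hypothetical choices, and the weights are random rather than trained. With a non-overlapping convolution of stride 20, a 2,000-position input shrinks to 100 positions, i.e. a 95% compression.

```python
# Hypothetical sketch of "convolutional compression + Fourier token mixing";
# NOT the paper's FNetCompression implementation. All sizes are assumptions.
import numpy as np


def one_hot_dna(seq: str) -> np.ndarray:
    """One-hot encode a DNA string as an (L, 4) array; bases outside ACGT (e.g. 'N') become all-zero rows."""
    alphabet = "ACGT"
    out = np.zeros((len(seq), 4))
    for i, base in enumerate(seq.upper()):
        j = alphabet.find(base)
        if j >= 0:
            out[i, j] = 1.0
    return out


def strided_conv1d(x: np.ndarray, w: np.ndarray, stride: int) -> np.ndarray:
    """'Valid' 1D convolution followed by ReLU. x: (L, C_in), w: (K, C_in, C_out) -> (L_out, C_out).

    With K == stride the windows do not overlap, so the length shrinks by a factor of `stride`.
    """
    k = w.shape[0]
    l_out = (x.shape[0] - k) // stride + 1
    windows = np.stack([x[t * stride : t * stride + k] for t in range(l_out)])  # (L_out, K, C_in)
    return np.maximum(np.einsum("tkc,kco->to", windows, w), 0.0)


def layer_norm(x: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    """Normalize each position's feature vector to zero mean and unit variance (no learned scale/shift)."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)


def fourier_mixing(x: np.ndarray) -> np.ndarray:
    """FNet-style token mixing: 2D DFT over the (sequence, hidden) axes, keeping only the real part.

    No learned parameters, and no L x L attention-score matrix is ever built.
    """
    return np.real(np.fft.fft2(x))


def fnet_block(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """One encoder block: Fourier mixing, then a ReLU feed-forward layer, each with residual + layer norm."""
    x = layer_norm(x + fourier_mixing(x))
    return layer_norm(x + np.maximum(x @ w1, 0.0) @ w2)


def predict_expression(seq: str, params: dict, stride: int = 20):
    """Hypothetical pipeline: one-hot -> strided conv (compression) -> FNet block -> mean pool -> scalar."""
    h = strided_conv1d(one_hot_dna(seq), params["conv"], stride)
    h = fnet_block(h, params["ff1"], params["ff2"])
    return float(h.mean(axis=0) @ params["head"]), h.shape[0]


# Usage with a random sequence and random (untrained) weights.
rng = np.random.default_rng(0)
seq = "".join(rng.choice(list("ACGT"), size=2000))
params = {
    "conv": rng.normal(scale=0.1, size=(20, 4, 32)),  # kernel 20 == stride 20
    "ff1": rng.normal(scale=0.1, size=(32, 64)),
    "ff2": rng.normal(scale=0.1, size=(64, 32)),
    "head": rng.normal(scale=0.1, size=32),
}
y_hat, compressed_len = predict_expression(seq, params)
print(compressed_len, f"{1 - compressed_len / len(seq):.0%}")  # 100 95%
```

Compressing first means the Fourier layer operates on 100 positions rather than 2,000, and the FFT replaces the 100 × 100 attention-score matrix that standard self-attention would materialize. How the actual model distributes compression across its layers is not specified here, so these numbers only illustrate the arithmetic behind a 95% reduction.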